Mobile Money Impact Evaluations: A Review

This piece was written in February of 2017 for CIPA’s online journal, The Cornell Policy Review. At that time, Stephanie Coker, MPA 2017, was a second-year CIPA student. Her concentration was in the area of Economic and Financial Policy. She is currently employed as a Research Associate for Monitoring and Evaluation at Tetra Tech, a leading provider of consulting, engineering, and technical services worldwide.

The 2000 Millennium Development Goals established by the United Nations provided a universally agreed-upon set of objectives for all its member nations to follow. The first Goal identified was the elimination of poverty. Eradication of poverty subsequently became the focus of many initiatives by organizations and governments around the world. To address some of the problems related to poverty, Muhammad Yunus started a financing scheme that would later develop into microfinance. Microfinance encompasses a set of activities aimed at increasing the financial inclusion of sectors of the population that have traditionally been unbanked.

The World Bank Group estimates that, globally, about two billion people lack access to a transaction account. Since the spring of 2015, the World Bank Group alone has committed over $8 billion in activities that will increase financial inclusion.

Outcomes measured by social scientists are mostly positive.

Between 2011 and 2014, for instance, microfinance activities decreased the number of financially excluded individuals by 20%.[1] However, there is still ongoing debate about the true impact of microfinance activities on alleviating poverty, with some research studies finding that microfinance has no impact on poverty reduction.[2]

Mobile money has recently emerged as one of the most promising microfinance strategies. Donovan defines mobile money as the provision of financial services through a mobile device. Potential advantages include benefits arising from the inherent characteristics of the services; benefits arising organically from widespread usage by individuals and among social networks; and benefits arising from purposeful and innovative applications, either made by developers or created by people’s uses of mobile money services.[3] However, the mobile money industry is still young, and many questions about it remain unanswered. Impact evaluations of mobile money activities remain scarce in comparison to impact evaluations of other microfinance activities, such as microcredit.

Impact evaluations remain the primary way of measuring the impact of policies and initiatives. The objective of an impact evaluation is to establish a pathway for causality and test its logic. A rigorous impact evaluation can provide policy-makers and program managers with information that verifies the effectiveness of a program and improves public service delivery. The following systematic two-stage review of six impact evaluations of mobile money initiatives will shed some light on theories of change associated with mobile money, underlying assumptions needed to establish congruency of theory, counterfactuals, and causal pathways.

Impact Evaluation: Objectives and Methodological Features

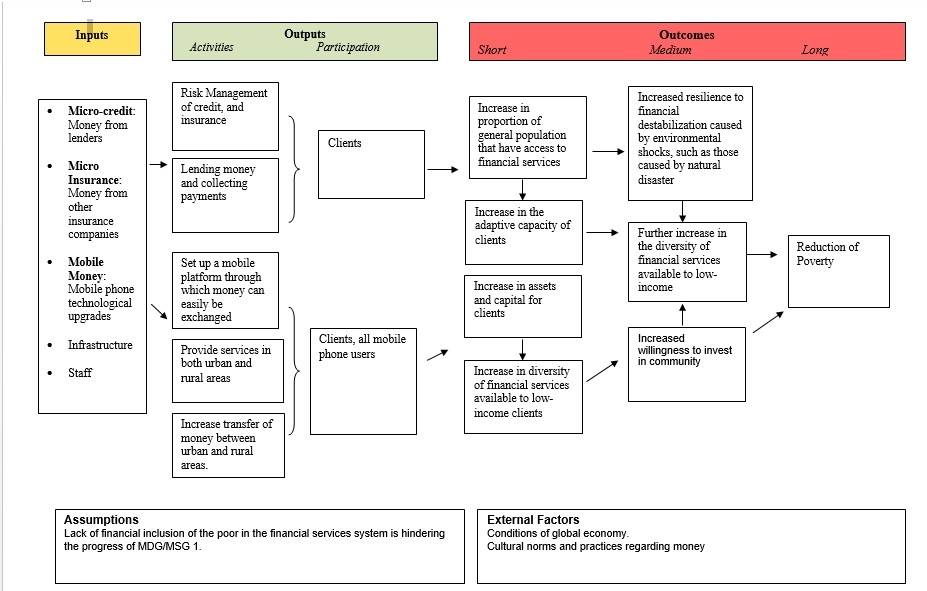

A good impact evaluation design rests on many methodological features. Establishing a valid research counterfactual and identifying a theory of change are essential to any impact evaluation. The concept of a counterfactual, first popularized through Rubin’s Causal Model, is, in this context, “what the outcome would have been for program participants if they had not participated in the program.” A theory of change is a logical sequence of events that tracks the mechanism of a program as it generates certain outcomes. Theories of change are especially important if the program or initiative is seeking to change behavior.[4] While there is not yet a consensus on what constitutes a valid microfinance theory of change, I will present a potential framework for one. In Figure 1, mobile money is included as an input in microfinance, along with microcredit and micro-insurance. Its pathway of causality is then traced to the goal of breaking the cycle of poverty through financial inclusion. As is shown, mobile money is only one facet of a whole set of microfinance services that will lead to the stated goal. This is a key fact when determining the extent of the impact mobile money activities will make on reducing poverty, because it contends that mobile money activities do not lead to significant impact unless combined with other microfinance activities. That is, the impact of mobile money activities on consumer behavior may not by itself lead to the reduction of poverty.

Figure 1: Microfinance Logic Model

Following from this theory of change, the ideal method of estimating the counterfactual is the use of a comparison group. This group must be identical in almost every way to the group that receives the program benefits. The research methodologies that best produce valid comparison groups are randomized control trials (RCTs).[5] Other methodologies must use more complicated approaches to set up a counterfactual. In the case of mobile money activities, the comparison group can be established by sampling from both users and non-users. Nonetheless, assignment of who uses mobile money activities should be randomized for the purposes of measuring impact, to prevent selection bias. Using evidence from prior research data unrelated to the current impact evaluation study may also be a valid way to design a comparative study. However, if prior research is used, additional efforts must be made to prove that the mined data adequately represents the state of the population without the treatment, in the state of the world where both treatment and non-treatment are possible. Furthermore, evaluation methods that do not use randomization turn out to be inherently biased. For example, propensity score matching as an evaluation method faces severe limitations to its internal validity because it relies heavily on the assumption that unobserved characteristics that affect both participation and outcomes do not exist.[6] To avoid bias, the use of randomization in evaluating mobile money services is necessary.
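The selection-bias argument can be illustrated with a small simulation. The sketch below is not drawn from any of the reviewed studies: the outcome scale, the true effect of 5, and the unobserved `wealth` trait are all hypothetical assumptions chosen for illustration. It compares a naive contrast between users and non-users, where better-off households adopt mobile money more often, with a contrast under random assignment.

```python
import random
import statistics


def simulate_selection_bias(n=20000, true_effect=5.0, seed=0):
    """Return (naive_estimate, randomized_estimate) of a hypothetical effect.

    Assumption: 'wealth' is an unobserved trait that raises both the chance
    of adopting mobile money and the outcome itself.
    """
    rng = random.Random(seed)
    self_selected = {True: [], False: []}
    randomized = {True: [], False: []}
    for _ in range(n):
        wealth = rng.gauss(0, 1)                     # unobserved by the evaluator
        baseline = 50 + 10 * wealth + rng.gauss(0, 1)

        # Self-selection: wealthier households are more likely to adopt.
        adopts = wealth + rng.gauss(0, 1) > 0
        self_selected[adopts].append(baseline + (true_effect if adopts else 0.0))

        # Random assignment: a coin flip that ignores wealth.
        assigned = rng.random() < 0.5
        randomized[assigned].append(baseline + (true_effect if assigned else 0.0))

    naive = statistics.mean(self_selected[True]) - statistics.mean(self_selected[False])
    rct = statistics.mean(randomized[True]) - statistics.mean(randomized[False])
    return naive, rct


if __name__ == "__main__":
    naive, rct = simulate_selection_bias()
    print(f"users vs. non-users (self-selected): {naive:.2f}")
    print(f"treatment vs. control (randomized):  {rct:.2f}")
```

Under these assumptions, the self-selected contrast overstates the effect because it absorbs the pre-existing wealth gap between adopters and non-adopters, while the randomized contrast lands close to the assumed true effect.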

Other methodological considerations to be examined when undertaking an impact evaluation are the sample size of the population being studied, the length of time allowed for a significant change to take place, the local contexts of each intervention, and the presence of a causal claim. Using these methodological features as tools for analysis, I will conduct a two-stage review. The first stage focuses primarily on the internal validity of each impact evaluation, while the second stage delves deeper into the counterfactuals presented, the rate of change of the intervention, and the results of the study. At the end of the first stage, I select impact evaluations of mobile money that have passed internal validity checks and use these for my second-stage review. To conclude, I present recommendations for future mobile money impact evaluations, based on my second-stage analysis.

First Stage Review

An extensive search was made through the following databases to identify impact evaluations of mobile money services:

- EBSCOhost

- World Bank Impact Evaluations Database

- International Initiative for Impact Evaluation database

- Abdul Latif Jameel Poverty Action Lab database

- Innovations for Poverty Action search project

- University of California Center for Effective Global Action (CEGA): Research Projects

- Asian Development Bank: Economic Research Publications

- National Bureau of Economic Research: Working Papers and Publications

- The International Growth Centre: Publications

- ECONlit database

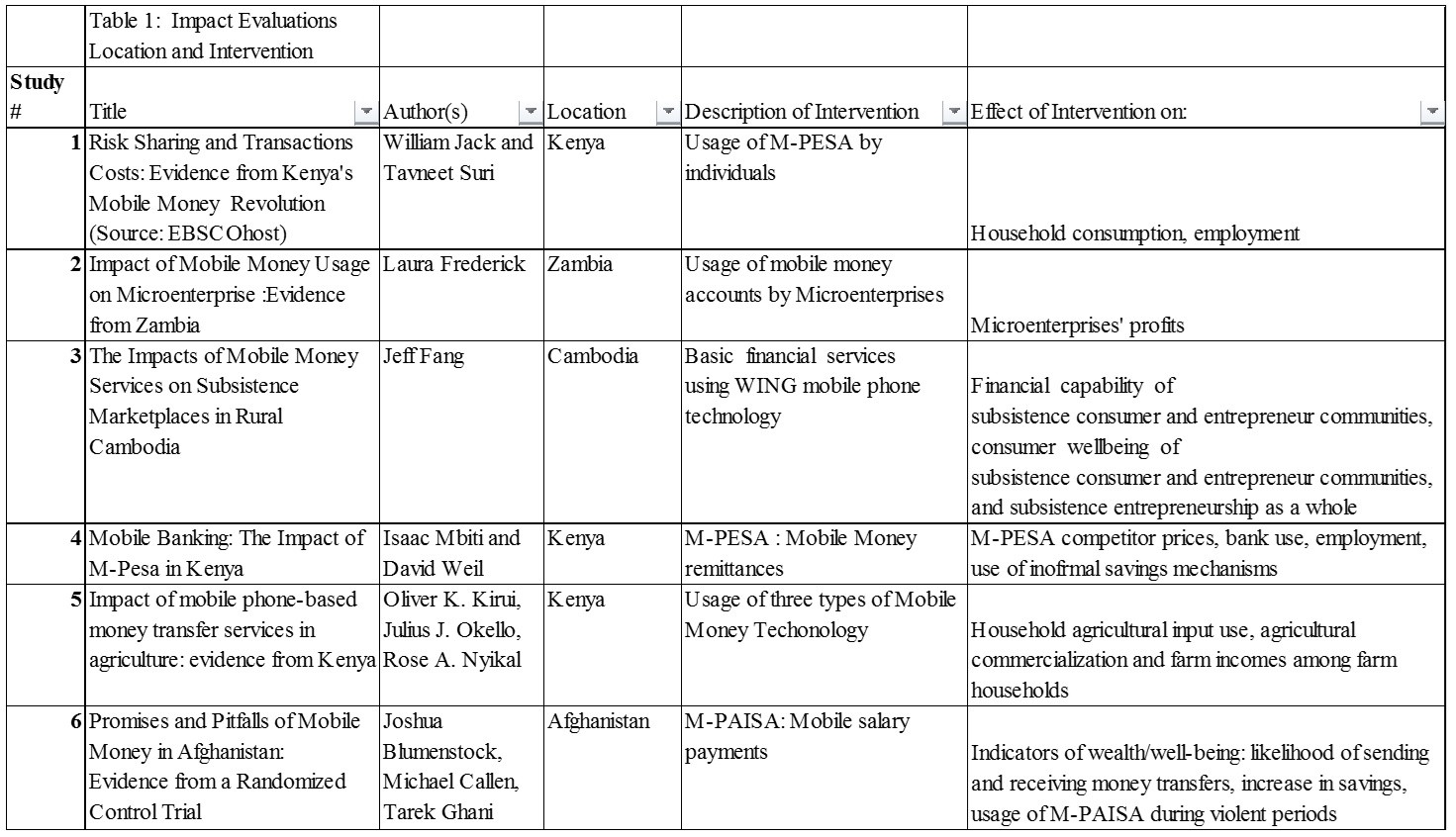

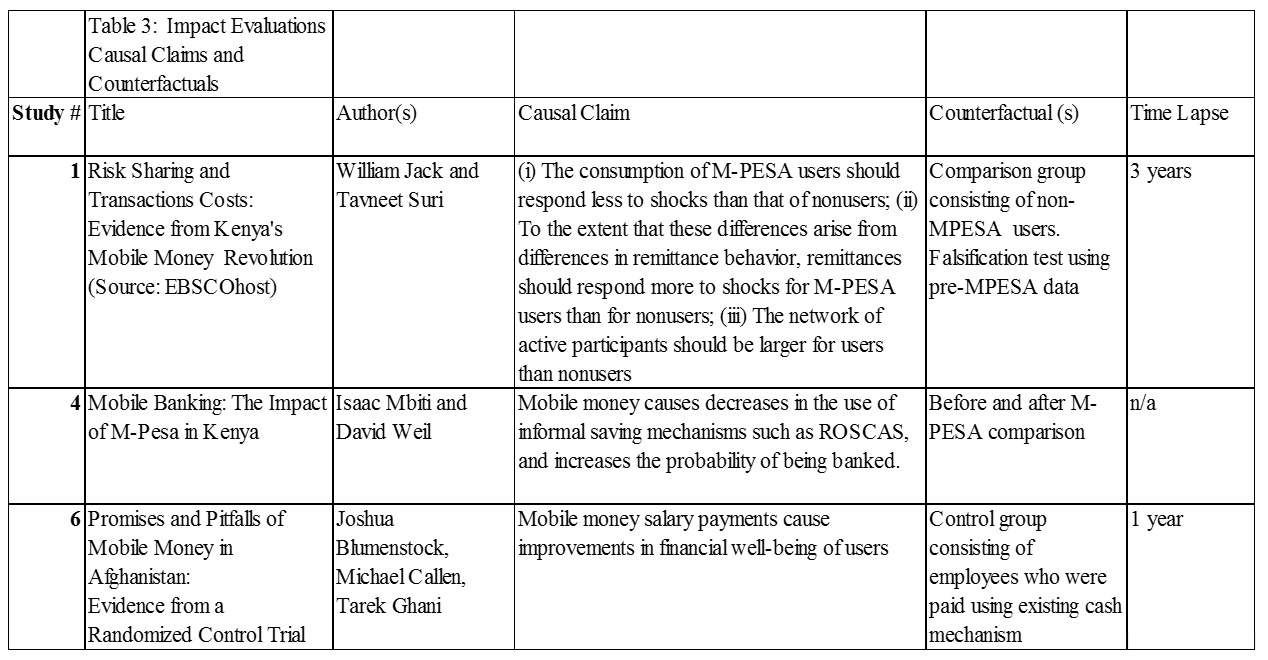

The search terms were “mobile money,” “microfinance,” and “M-PESA.” Six impact evaluations relating to mobile money were found. Criteria used to analyze impact evaluation reports are summarized in Tables 1, 2 and 3.

Table 1 Analysis

Because mobile money is a relatively new intervention, it is important to understand the reasons why it has been implemented in certain locations, why impact evaluations’ designs vary by location, and how impact evaluations vary by type of mobile money technology. Table 1 displays the country of implementation and a brief description of each intervention. This information is important because different methodologies are appropriate for different types of interventions. For example, impact evaluations of M-PESA are retrospective because the release of M-PESA in Kenya was not accompanied by a simultaneous impact evaluation study; furthermore, widespread uptake of M-PESA technology was only established after years of successful marketing, which delayed its evaluation. On the other hand, impact evaluations of mobile money services in Cambodia, Afghanistan and Mozambique are prospective in nature. For retrospective impact evaluations of M-PESA, the burden of proof to establish causality is heavier without a ready-made counterfactual, and therefore researchers must compensate with more sophisticated evaluation designs or risk a loss of internal validity.

As is evidenced by the last two columns of Table 1, mobile money impact evaluations vary regarding what constitutes implementation of mobile money activities. In Studies 1, 2, 3, and 6, researchers use the usage of mobile money services as the measure of treatment; Study 4 uses mobile money remittances, and Study 7 uses mobile money salary payments. More importantly, each study uses different indicators to measure impact. According to the microfinance framework of change that I have outlined, outcomes should broadly relate to an increase in individual or household wealth and improved resilience to shocks.[7] All studies except Study 2 produce estimates on individual or household wealth. Study 2 focuses on impact on microenterprises, which creates a longer and more complex causal chain, and the proliferation of microenterprises does not necessarily lead to poverty reduction. For this reason, I will not include Study 2 in my second-stage analysis.

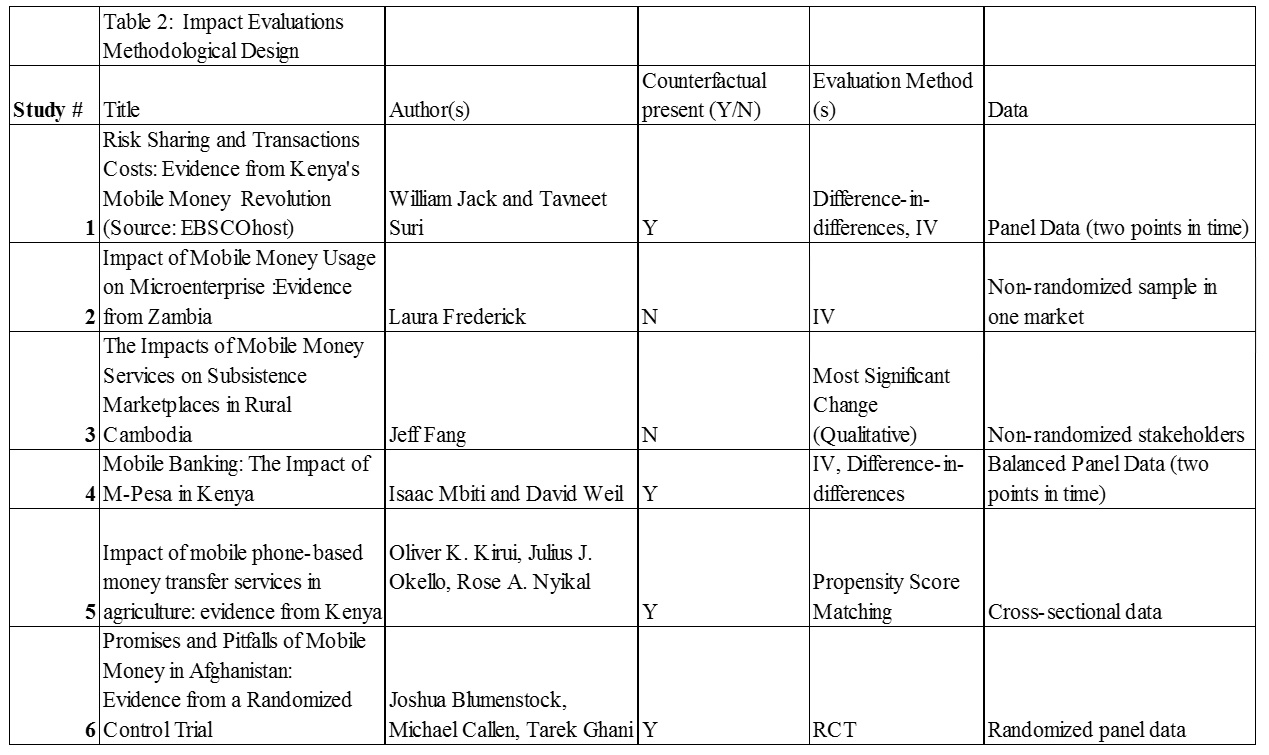

Table 2 Analysis

In Table 2, technical aspects of each impact evaluation are reviewed. These technical aspects are components of research methodological design and include evaluation method, study design and data, and presence of a counterfactual. As a counterfactual is needed to establish proof of causality, Study 3 is eliminated from the second-stage review because it does not present a stated counterfactual. Additionally, for reasons stated earlier in my Objectives and Methodological Features section, it is important that the evaluation methods use randomization. Hence, Study 7 is eliminated from my second-stage review because it uses propensity score matching.

Second-Stage Review

After the elimination of three studies, the remaining three are analyzed based on whether their evaluation answers their causal claim, whether they have valid counterfactuals, and whether they leave enough time between baseline and endline studies. Reviewing this information will reveal the logic behind what sparks economic changes for users of mobile money and whether the research methodology of each study is appropriate for that logic. Results of the analyses are presented in Table 3. Although Study 1 and Study 4 do have counterfactuals, they are what Gertler et al. refer to as counterfeit counterfactuals. Gertler et al. identify two ways in which counterfeit counterfactuals can be generated: before-and-after comparisons, and comparisons of enrolled and non-enrolled groups.[8] Study 1 forms its comparison group from data on non-users of M-PESA. However, using data from non-users of M-PESA introduces selection bias and will bias estimates of impact. Study 4 relies on a before-and-after comparison of periods when M-PESA was and was not available. This method also creates biased estimates, because the comparison assumes that if M-PESA had never existed, the outcome for M-PESA users would have been exactly the same as their pre-M-PESA situation. Because of these methodological concerns, Study 1 and Study 4 are eliminated as models for future mobile money impact evaluations.
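The problem with a before-and-after comparison can be sketched in the same way. The simulation below is a hypothetical illustration, not a reconstruction of Study 4: it assumes that outcomes would have risen by `trend` over the period even without the program, and that a randomized comparison group is available. Subtracting the comparison group's change is a technique I introduce here only to show what the before-and-after estimate misses.

```python
import random
import statistics


def simulate_before_after(n=5000, true_effect=3.0, trend=4.0, seed=1):
    """Return (before_after_estimate, estimate_net_of_comparison_group_change).

    Assumption: every household's outcome drifts upward by `trend` between
    the two periods, whether or not it receives the program.
    """
    rng = random.Random(seed)
    treated_before, treated_after = [], []
    control_before, control_after = [], []
    for _ in range(n):
        base_t = rng.gauss(50, 10)
        treated_before.append(base_t + rng.gauss(0, 1))
        treated_after.append(base_t + trend + true_effect + rng.gauss(0, 1))

        base_c = rng.gauss(50, 10)
        control_before.append(base_c + rng.gauss(0, 1))
        control_after.append(base_c + trend + rng.gauss(0, 1))

    # Counterfeit counterfactual: assumes nothing would have changed over time.
    before_after = statistics.mean(treated_after) - statistics.mean(treated_before)
    # Net out the change that the comparison group experienced anyway.
    control_change = statistics.mean(control_after) - statistics.mean(control_before)
    return before_after, before_after - control_change


if __name__ == "__main__":
    naive, adjusted = simulate_before_after()
    print(f"before-and-after estimate:          {naive:.2f}")
    print(f"net of comparison group's change:   {adjusted:.2f}")
```

Under these assumptions, the before-and-after estimate attributes the background trend to the program, while the estimate that nets out the comparison group's change stays close to the assumed true effect.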

Finally, it is worthwhile to examine the time lapse of each impact evaluation because, with any new program or intervention, enough time needs to pass for changes to become observable. In Study 1, researchers observe a 3-year lapse, while in Study 4, they observe an 8-year lapse. In Study 6, researchers admit that a one-year time lapse may not be sufficient to study impacts of mobile money services.[9] In the Key Findings and Recommendations section, I present a summary of my findings and the implications of each finding. I will also make recommendations for future impact evaluations of mobile money services.

Key Findings and Recommendations

After conducting a two-stage review of studies that estimate the impact of mobile money on several indicators, it is evident that only Study 6 is conducted with a valid counterfactual, a robust evaluation method, and random assignment. All the other studies are eliminated by other criteria. Yet Study 6 possibly still does not provide enough time for impact attributable to the mobile money program to emerge. Furthermore, the researchers of Study 6 admit that a larger sample size would aid in detecting smaller impacts. Thus, there are several implications that arise from my findings:

- The true impact of mobile money cannot be estimated with the M-PESA retrospective studies, because of the presence of counterfeit counterfactuals.

- A wide variety of valid instruments can be used to determine impact using IV analysis. Instruments used in the studies I have reviewed are household consumption, income, employment, and changes in the use of safe saving mechanisms.

- Because the demographics of users and non-users of mobile money services may vary greatly between groups, random assignment of treatment is a necessity to eliminate selection bias.

- Although qualitative impact evaluations of mobile money provide information about which indicators are best in local contexts, it is extremely difficult to claim causality without a counterfactual. This means that causal claims are difficult to verify.

- Time lapses must be a year or more to estimate income; otherwise, sample size needs to be large enough to detect minute changes in impact estimates.
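The link between sample size and the smallest detectable impact can be made concrete with a standard two-arm sample-size approximation. The sketch below is not taken from any of the reviewed studies; it assumes equal-sized arms, a normally distributed outcome with a common standard deviation, a two-sided test, and conventional (hypothetical) defaults of 5% significance and 80% power.

```python
import math
from statistics import NormalDist


def n_per_arm(effect, sd, alpha=0.05, power=0.80):
    """Approximate households needed in each arm to detect `effect`.

    Uses the normal approximation n = 2 * ((z_(1-alpha/2) + z_power) * sd / effect)^2.
    `effect` and `sd` must be in the same units (for example, monthly income).
    """
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) * sd / effect) ** 2)


if __name__ == "__main__":
    # Hypothetical effects, expressed as fractions of one standard deviation.
    for effect in (0.5, 0.2, 0.1):
        print(f"effect = {effect} sd -> {n_per_arm(effect, 1.0)} per arm")
```

Because the required sample grows with the inverse square of the effect, halving the impact one hopes to detect roughly quadruples the number of households needed in each arm.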

The following recommendations proceed from these implications:

- Quantitative impact evaluations of mobile money activities should only be carried out if an RCT is possible. Otherwise, counterfeit counterfactuals and selection bias are present, and true impact cannot be measured.

- Yet, qualitative impact evaluations of mobile money do not present a valid method to claim causality.

- Impact evaluations of mobile money should consider mobile money as one slice of the pie that makes financial inclusion possible. Therefore, future impact estimates should be examined at higher levels of significance.

Moving forward, these recommendations will be important for implementers and evaluators as they start new mobile money schemes. If these suggested strategies are adopted, more robust evidence will emerge about whether mobile money is an effective tool for poverty alleviation. A widening pool of robust evidence can then inform decision-making at the highest levels, as both the public and private sectors figure out ways in which mobile money can be most useful to society.

References

- “UFA2020 Overview: Universal Financial Access by 2020,” World Bank, accessed February 13, 2017, http://www.worldbank.org/en/topic/financialinclusion/brief/achieving-universal-financial-access-by-2020.

- Thomas W. Dichter, “Too Good to Be True: The Remarkable Resilience of Microfinance,” Harvard International Review 32, no. 1 (2010): 18–21.

- Kevin Donovan, “Mobile Money for Financial Inclusion,” 2012, 63, http://elibrary.worldbank.org/doi/abs/10.1596/9780821389911_ch04.

- Paul J. Gertler et al., Impact Evaluation in Practice (The World Bank, 2010), 8, 22, doi:10.1596/978-0-8213-8541-8.

- Ibid., 37, 38.

- Ibid., 117.

- For further discussion about resilience in international development, see Barrett and Constas (2013), “Toward a theory of resilience for international development applications,” Proceedings of the National Academy of Sciences, vol. 111, no. 40, 14625–14630, doi:10.1073/pnas.1320880111.

- Gertler et al., Impact Evaluation in Practice, 8.

- J. Blumenstock et al., “Promises and Pitfalls of Mobile Money in Afghanistan: Evidence from a Randomized Control Trial,” 2015, 8, http://www.povertyactionlab.org/publication/violence-and-financial-decisions-evidence-mobile-money-afghanistan.
